Documentation, attribution, and skepticism as clinical safety tools

Defensive medicine used to mean ordering one more test, writing one more line in the note, or documenting that risks were discussed “in detail.” That definition no longer holds. In the AI era, defensive medicine is less about doing more than about showing how decisions were made, who contributed to them, and how uncertainty was handled once artificial intelligence entered the workflow.

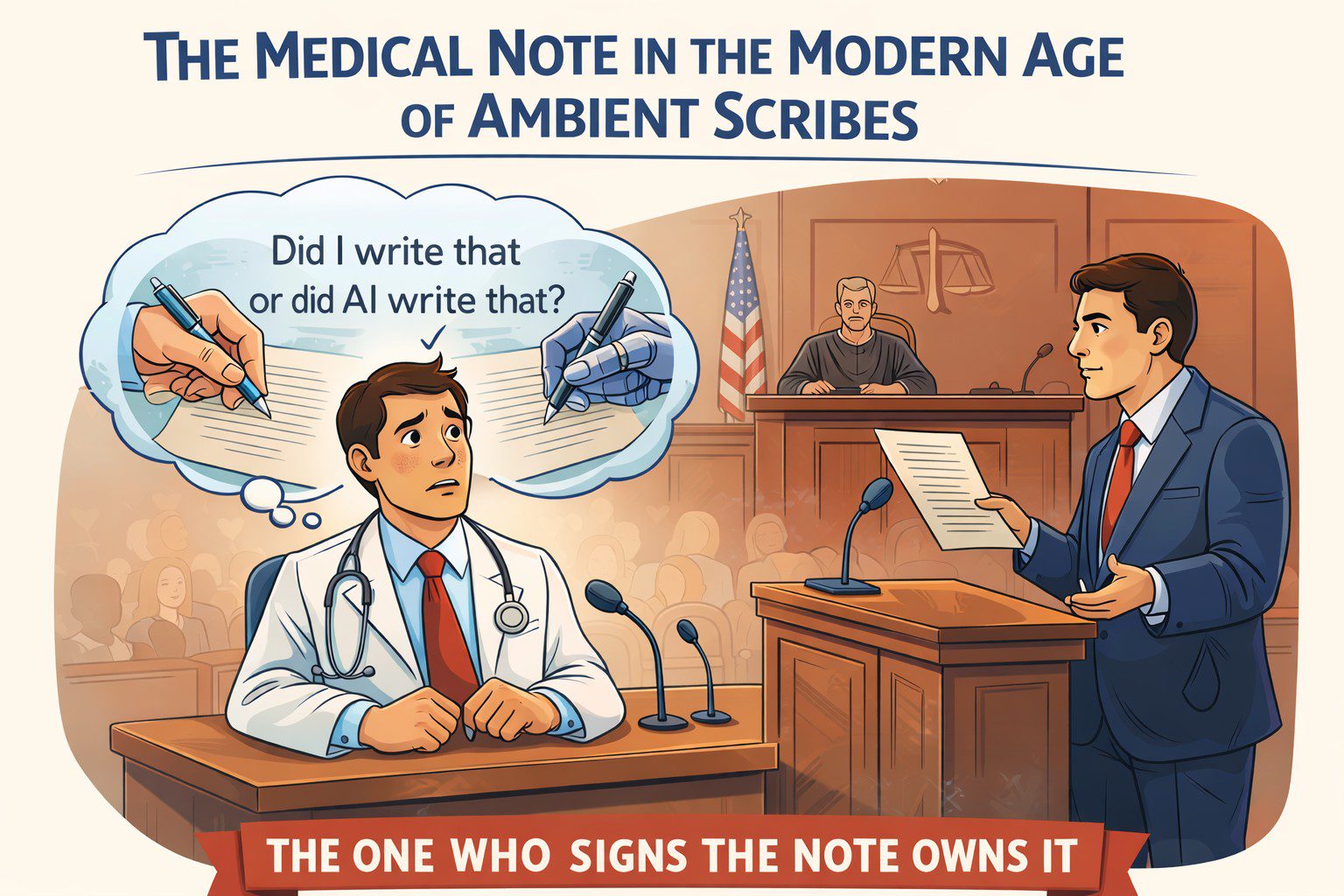

The medical record is no longer written by a single clinician at the end of a long shift. It is increasingly co-authored by ambient scribes, summarization engines, clinical decision support tools, and large language models that can sound confident even when they are wrong.

Here is the legal reality clinicians need to absorb early: When AI enters the chart, responsibility does not shift. It concentrates on the clinician who signs.

A shared workspace, personal liability

Most clinicians already use AI, whether they call it that or not. Ambient documentation, auto-generated assessments, triage tools, record summarization, and literature synthesis are now routine. These tools reduce friction and save time. But they also introduce a new medico-legal problem:

If an AI-generated statement is wrong and it appears in the chart, who owns it?

The law has been consistent so far. The signer owns the note. Courts do not meaningfully distinguish between human-authored and AI-assisted documentation. The medical record remains a clinician’s representation of reality, regardless of how the text was produced. That alone should change how we document.

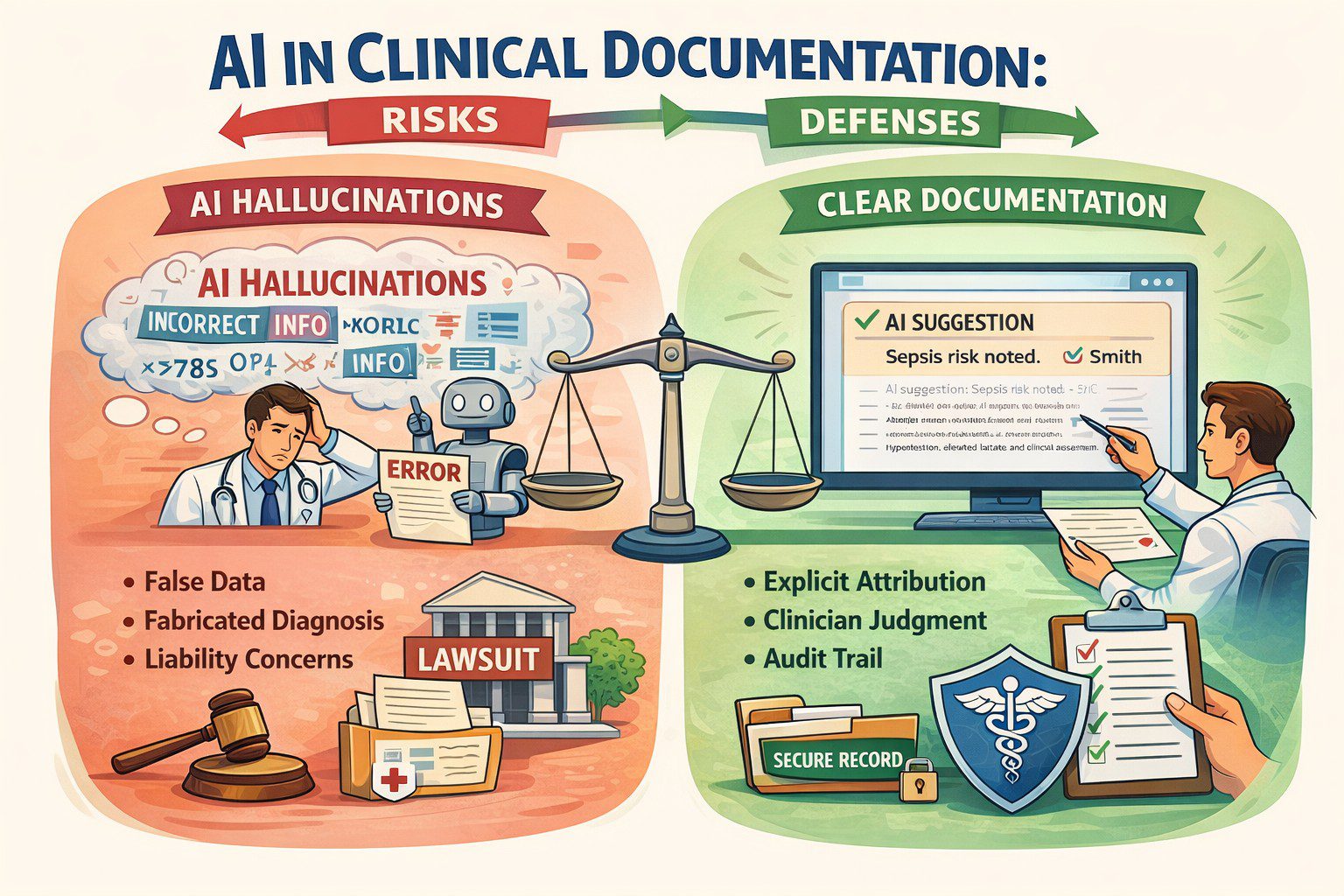

Hallucinations are a feature, not a bug

One of the most dangerous myths in healthcare AI is the belief that hallucinations are technical glitches that will be fixed with better models. They will not. Hallucinations are a structural feature of large language models. These systems do not retrieve truth. They generate statistically plausible language based on patterns in their training data.

This behavior is not accidental. During training, models are rewarded for producing an answer, not for saying “I don’t know.” In fact, any AI model that claims over 75 percent accuracy in a complex system should not be taken at face value: it is probably learning the wrong thing very well.

Language models amplify this risk. They sound authoritative. They write cleanly. They can fabricate references, guidelines, or reasoning in ways that are hard to detect unless the reader already knows the answer. In healthcare, fluency without grounding is not neutral. It is dangerous.

Accuracy is not trustworthiness

We are repeatedly told to trust AI because it is “95 percent accurate.” However:

- Accuracy is not safety.

- Accuracy can hide bias.

- Accuracy can ignore uncertainty.

- Accuracy can collapse under distribution shift, when patients or practice patterns differ from those in the training data.

In medicine, what matters is not how often a model is right in aggregate, but how it fails, when it fails, and whether humans can detect that failure in time. Clean metrics do not equal resilient performance.
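To make the gap between aggregate accuracy and safe performance concrete, here is a minimal Python sketch using entirely hypothetical numbers (not drawn from any real model or study). It assumes a validation cohort in which a model is right 98 percent of the time on typical presentations and 68 percent of the time on atypical ones, which pools to the advertised 95 percent. Moving the same model to a site where atypical cases make up half the caseload, with no change in per-subgroup performance, drops pooled accuracy to 83 percent. The names `Subgroup` and `aggregate_accuracy` are illustrative only.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Subgroup:
    """Hypothetical results for one patient subgroup."""
    name: str
    n_cases: int
    n_correct: int

    @property
    def accuracy(self) -> float:
        return self.n_correct / self.n_cases


def aggregate_accuracy(groups: List[Subgroup]) -> float:
    """Pooled accuracy: all correct predictions over all cases."""
    total = sum(g.n_cases for g in groups)
    correct = sum(g.n_correct for g in groups)
    return correct / total


# Illustrative numbers only, not real data.
# Validation cohort: atypical presentations are 10% of cases.
validation = [
    Subgroup("typical presentation", n_cases=900, n_correct=882),  # 98% correct
    Subgroup("atypical presentation", n_cases=100, n_correct=68),  # 68% correct
]

# Deployment site: identical per-subgroup performance,
# but atypical presentations are now half the caseload.
deployment = [
    Subgroup("typical presentation", n_cases=500, n_correct=490),  # 98% correct
    Subgroup("atypical presentation", n_cases=500, n_correct=340),  # 68% correct
]

for label, cohort in [("validation", validation), ("deployment", deployment)]:
    print(f"{label}: aggregate accuracy {aggregate_accuracy(cohort):.0%}")
    for g in cohort:
        print(f"  {g.name}: {g.accuracy:.0%}")
```

The pooled figure changes even though the model itself did not; only the per-subgroup lines reveal where and how often it fails.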

“Who (or what) said what” now matters

When AI contributes to clinical reasoning or documentation, attribution becomes a safety tool. Attribution documents judgment. It shows that AI assisted but did not replace reasoning. Years later, when the chart is reviewed after an adverse outcome, that distinction can matter a great deal.

The standard of care is already shifting

Clinicians may be exposed both when they follow AI and when they override it, especially after adverse outcomes. This tension has been described as the negative outcome penalty paradox: once AI becomes normalized, clinicians can be punished whether they follow or reject its recommendations. Defensive medicine now requires reasoned positioning, not blind adoption or avoidance.

Defensive documentation needs a major redesign

Traditional defensive documentation emphasized thoroughness; AI-era defensive documentation emphasizes provenance. Practical shifts clinicians should adopt now:

- Label AI-assisted content explicitly.

- Avoid pasting AI output without review.

- Document when AI recommendations were rejected and why.

- Verify AI-generated citations and guidelines against the original source before relying on them.

- Preserve clinician reasoning, not just summaries.
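For teams building or configuring documentation tools, the provenance practices above can be sketched as a simple data structure. This is a minimal, hypothetical Python sketch, not any EHR vendor's API: `Source`, `NoteSegment`, `signing_problems`, and `render` are illustrative names. Each segment of a note carries its source, whether the signing clinician reviewed it, and, for an AI recommendation that was not followed, the clinician's stated reason. Unreviewed AI-assisted text is flagged before signing.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Source(Enum):
    """Who (or what) produced a piece of note text."""
    CLINICIAN = "clinician"
    AI_ASSISTED = "AI-assisted"


@dataclass
class NoteSegment:
    text: str
    source: Source
    reviewed: bool = False  # signing clinician has read and accepted this text
    # For an AI recommendation the clinician chose not to follow: the reason why.
    override_reason: Optional[str] = None


def signing_problems(segments: List[NoteSegment]) -> List[str]:
    """List issues that should block signing: unreviewed AI-assisted text."""
    return [
        f"segment {i}: AI-assisted text not reviewed"
        for i, seg in enumerate(segments)
        if seg.source is Source.AI_ASSISTED and not seg.reviewed
    ]


def render(segments: List[NoteSegment]) -> str:
    """Render the note with an explicit provenance label on every segment."""
    lines = []
    for seg in segments:
        line = f"[{seg.source.value}] {seg.text}"
        if seg.override_reason:
            line += f" [AI recommendation not followed: {seg.override_reason}]"
        lines.append(line)
    return "\n".join(lines)


# Hypothetical note: the ambient-scribe summary has not been reviewed yet.
note = [
    NoteSegment("History summarized from visit audio.", Source.AI_ASSISTED),
    NoteSegment("Tool suggested additional imaging.", Source.AI_ASSISTED,
                reviewed=True, override_reason="low pretest probability on exam"),
    NoteSegment("Assessment and plan written by clinician.", Source.CLINICIAN),
]

print(signing_problems(note))  # flags segment 0 only
print(render(note))
```

The design choice is that provenance travels with each piece of text rather than being summarized once at the bottom of the note, so a reader years later can still see which statements the clinician wrote, which were AI-assisted, and where AI advice was deliberately rejected.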

Training clinicians is now a legal issue

Clinicians do not need to become data scientists, but they do need to understand where hallucinations are most likely, how training data limitations affect output, and why confidence does not equal correctness. Ignorance will not be a defense when AI tools are widely available and increasingly normalized.

Augmented intelligence is the only defensible frame

Augmented intelligence keeps the clinician explicitly in the loop. It reinforces that AI assists but does not decide. The moment AI silently replaces reasoning in documentation, both patient safety and legal defensibility erode. Framed this way, the chart reclaims its original role: a legal narrative of clinical reasoning under uncertainty.

About the author

Dr. Hassan Bencheqroun is a pulmonary and critical care physician, assistant professor at the University of California Riverside School of Medicine, and CEO of Medical AI Academy. He hosts “The AI-Ready Doctor” podcast and is an active AiMed participant and speaker bridging clinical care, education, and technology.